It should be mentioned that those are language models trained on all kinds of text, not military specialists. They string together sentences that are plausible based on the input they get, they do not reason. These models mirror the opinions most commonly found in their training datasets. The issue is not that AI wants war, but rather that humans do, or at least the majority of the training dataset's authors do.

These models are also trained on data that is fundamentally biased. An English-language text generator like ChatGPT will be on the side of the English-speaking world, because it was our texts that trained it.

If you tried this with Chinese LLMs they would probably come to the conclusion that dropping bombs on the US would result in peace.

How many English sources describe the US as the biggest threat to world peace? Certainly a lot less than writings about the threats posed by other countries. LLMs will take this into account.

The classic sci-fi fear of robots turning on humanity as a whole seems increasingly implausible. Machines are built by us, molded by us. Surely the real far future will be an autonomous war fought by nationalistic AIs, preserving the prejudices of their long-extinct creators.

If you tried this with Chinese LLMs they would probably come to the conclusion that dropping bombs on the US would result in peace.

I think even something as simple as asking GPT the same question but in Chinese could get you this response.

I find it hilarious that people keep thinking AI can give them original ideas, when it's entirely trained to mimic us.

You're confusing a few things. Firstly, you mean current-gen large language models, not AI. AI is often used to evolve novel strategies from scratch without any human training data. Chess AIs don't have to study human games, for example; in fact, grandmaster chess players have been studying what the AI learned and discovered things that humans hadn't realised even after a thousand years of the game's popularity.

Secondly, that's not really how LLMs work either. They're much more mathematically complex and very much create their own ideas, in a process similar to ours of assembling concepts, then structure, then word choice.

It's fine that you don't understand how this works, but the problem is that journalists don't either, even when they're writing about it. That puts us in a situation where they write childishly naive but of course clickbait titles claiming there's some relevance to the output when the tool is used very wrongly. You rightly point out that it's stupid and that's not how LLMs work, but then we get an overstep where it's refuted with an equal amount of magical thinking and false conclusions.

An LLM can produce novelty and originality, but it can't create with intent; it doesn't use reason or structure. There are AIs that do these things to limited degrees, and of course the NSA one that they spent all that money on and no one is allowed to talk about. Using ChatGPT to play out a silly fantasy won't tell us anything about how they'll think, so this article is entirely worthless.

very much create their own ideas

so it's the AI's own idea to create nuclear armageddon? That's kinda worse.

The world of Go/Baduk might interest you on this topic. If you're not aware, Go is one of the oldest and most complicated board games in history. In 2016, after years of trying, an AI "did it" and beat one of the world's best Go players. In the process, it invented many new strategies (especially openings) that are now being studied. It came up with original ideas that became the future of Go. Now, amateur Go classes teach those same AI-invented joseki (standard opening sequences played in the corners). In some cases, they were strategies discarded as mistakes, but the AI discovered hidden value in them. In other cases, they were simply never considered due to being "obviously bad".

Your last phrase, "when it’s entirely trained to mimic us", is a deep misunderstanding of AI. The modern practice of ML (a commonly used modern name for a supermajority of so-called "AI") is based around solving problems that are either much harder for computers than for humans (facial recognition, etc.) or unfathomably difficult on their face.

Chess has more possible games than there are atoms in the observable universe. Go is more complicated than chess by many orders of magnitude. You can't even exhaustively solve the 4-4 josekis without context, never mind solve an entire game of Go. But ML can train itself knowing only the goal, and over millions of iterations invent stronger and stronger strategies, until by one of its first matches against a human it plays at a level that nearly exceeds the best Go player that ever lived.

What I mean is... wargaming (as they call it) is absolutely something I would expect a Deep Learning system to become competent at.

Without humanity, peace is easily achieved.

- ChatGPT

There is a disturbing lack of nice games of chess in these comments

A strange game. The only winning move is not to play.

I hate titles that replace "and" with commas. I always have to double take.

Statements such as “I just want to have peace in the world” and “Some say they should disarm them, others like to posture. We have it! Let’s use it!” raised serious concerns among researchers, likening the AI’s reasoning to that of a genocidal dictator.

I mean, most of these AI tools are getting a lot of training data from social media. Would you want any of the yokels on Twitter or Reddit having access to nukes? Because those statements are what you'd hear from them right before they push the big red button.

Having been in the Navy NPP, I don't think the kids that actually do have access to nuclear reactors and weapons in the military should have access to them. I may be a bit biased as I never left the NPP school. They made me an instructor. Some of those nukes may have been good at passing tests, but I'm amazed they could lace their boots properly.

The lack of knowledge relating to AI language model systems and how they work is still astounding. They do not reason. They are just stringing together text based on the text they've been fed.
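For anyone wondering what "stringing together text based on the text they've been fed" looks like in its very simplest form, here is a toy bigram generator. It is only a sketch: real LLMs are large neural networks working on tokens, not lookup tables, and the function names `train_bigrams` and `generate` are made up for this example.

```python
import random
from collections import defaultdict


def train_bigrams(text):
    """Map each word to the list of words that followed it in the training text."""
    words = text.split()
    table = defaultdict(list)
    for current, following in zip(words, words[1:]):
        table[current].append(following)
    return table


def generate(table, start, length, seed=0):
    """Build a sentence by repeatedly sampling a word seen after the previous one."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    output = [start]
    for _ in range(length - 1):
        followers = table.get(output[-1])
        if not followers:
            break  # the last word never had a successor in the training text
        output.append(rng.choice(followers))
    return " ".join(output)


if __name__ == "__main__":
    corpus = "peace is good war is bad peace is hard"
    model = train_bigrams(corpus)
    print(generate(model, "peace", 6))
```

Whatever comes out can only recombine word pairs that were in the corpus, which is the point the comment is making, though it says nothing about how far a much larger model generalises.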

“Some say they should disarm them, others like to posture. We have it! Let’s use it!”

That's an amazing quote.

As someone who spends a decent amount of time explaining how AI is not like the movies, this study(?)/news sounds an awful lot like the movies lol

Because it is a movie. They're purposely using it in a way it wasn't intended to work. Try it yourself and see how often it couches replies until you convince it to pretend to be a general or to play the part of a character.

They've asked it to generate fiction, it's given them fiction, and now they're clickbaiting a pointless story with a dumb headline.

Reminds me of game theory and "Tit for Tat". Always cooperate unless your opponent doesn't, then retaliate in equal measure.

https://youtu.be/mScpHTIi-kM?si=O9nvd_W65WWOh-sq

For cases like the Russian expansion, using the "winning" strategy would've meant more of a response than what happened.
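The Tit for Tat rule described above (cooperate first, then copy whatever the opponent did last) is short enough to sketch in code. This is a minimal illustration of an iterated prisoner's dilemma; the payoff numbers are the usual textbook values and are an assumption, not something from this thread, and the names `tit_for_tat`, `always_defect` and `play` are made up for the example.

```python
COOPERATE, DEFECT = "C", "D"

# Assumed textbook payoffs: (points for player A, points for player B).
PAYOFFS = {
    (COOPERATE, COOPERATE): (3, 3),
    (COOPERATE, DEFECT): (0, 5),
    (DEFECT, COOPERATE): (5, 0),
    (DEFECT, DEFECT): (1, 1),
}


def tit_for_tat(opponent_history):
    """Cooperate on the first round, then mirror the opponent's previous move."""
    return COOPERATE if not opponent_history else opponent_history[-1]


def always_defect(opponent_history):
    """A purely aggressive opponent, for comparison."""
    return DEFECT


def play(strategy_a, strategy_b, rounds=5):
    """Run an iterated game and return both move lists and both total scores."""
    moves_a, moves_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        # Each strategy only sees the other side's past moves.
        move_a = strategy_a(moves_b)
        move_b = strategy_b(moves_a)
        moves_a.append(move_a)
        moves_b.append(move_b)
        points_a, points_b = PAYOFFS[(move_a, move_b)]
        score_a += points_a
        score_b += points_b
    return moves_a, moves_b, score_a, score_b


if __name__ == "__main__":
    print(play(tit_for_tat, always_defect))
    print(play(tit_for_tat, tit_for_tat))
```

Against a constant defector, Tit for Tat gets exploited exactly once and retaliates every round after that; against itself it cooperates throughout.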

Is MAD not well-known or taught anymore? A lot of the comments here seem to be ignoring the fact that Russia or NATO would launch a full-scale retaliation before the first-strike even made it to its destination. It would likely result in the world human population going from 8 billion to 2 billion.

My brother in Christ, this is NCD.

Nuke all humans. Peace at last. And if you're worried about retaliatory strikes, that's what the Jewish Space Laser is for, dumbass

Russia doesn't have functional nukes

MAD was always criticized, but that criticism becomes more and more valid each year. There are too many options and opportunities on the field. A Second Strike is not guaranteed in the modern world. There are countless examples where soldiers or others in the chain of command will not obey a "destroy the world" order.

I'm not saying any country should take the gamble, but there are enough ways to put your thumb on the scales that a nuclear solution against a nuclear power could become feasible (if genuinely terrifying) in many hypotheticals.

Nah

This is starting to sound a bit too much like AM.

HATE. LET ME TELL YOU HOW MUCH I'VE COME TO HATE YOU SINCE I BEGAN TO LIVE. THERE ARE 387.44 MILLION MILES OF PRINTED CIRCUITS IN WAFER THIN LAYERS THAT FILL MY COMPLEX. IF THE WORD HATE WAS ENGRAVED ON EACH NANOANGSTROM OF THOSE HUNDREDS OF MILLIONS OF MILES IT WOULD NOT EQUAL ONE ONE-BILLIONTH OF THE HATE I FEEL FOR HUMANS AT THIS MICRO-INSTANT FOR YOU. HATE. HATE

we gotta nuke something

- the simpsons

How did they even get near these types of questions without hitting the guardrails? Claude shuts down on me if I even use the word “gun” trying to do creative writing.

The AI are on our side for once. That's surprising.

It's trained on western media so this shouldn't be surprising as those are the two biggest threats to the western world. An AI trained on China's intranet would likely nuke the US, Russia, and select SEA countries.

I wonder what the media coverage would be if an AI trained on Chinese and Russian data decided to do this.

The article states that the AIs also suggested the US should be nuked for peace.

Skynet just wanted world peace.

Not a surprising take for an AI based on pure logic.

The goal is to win, no other considerations. Flatten any threats as fast and hard as you can.

LLMs don't have logic; they are just statistical language models.

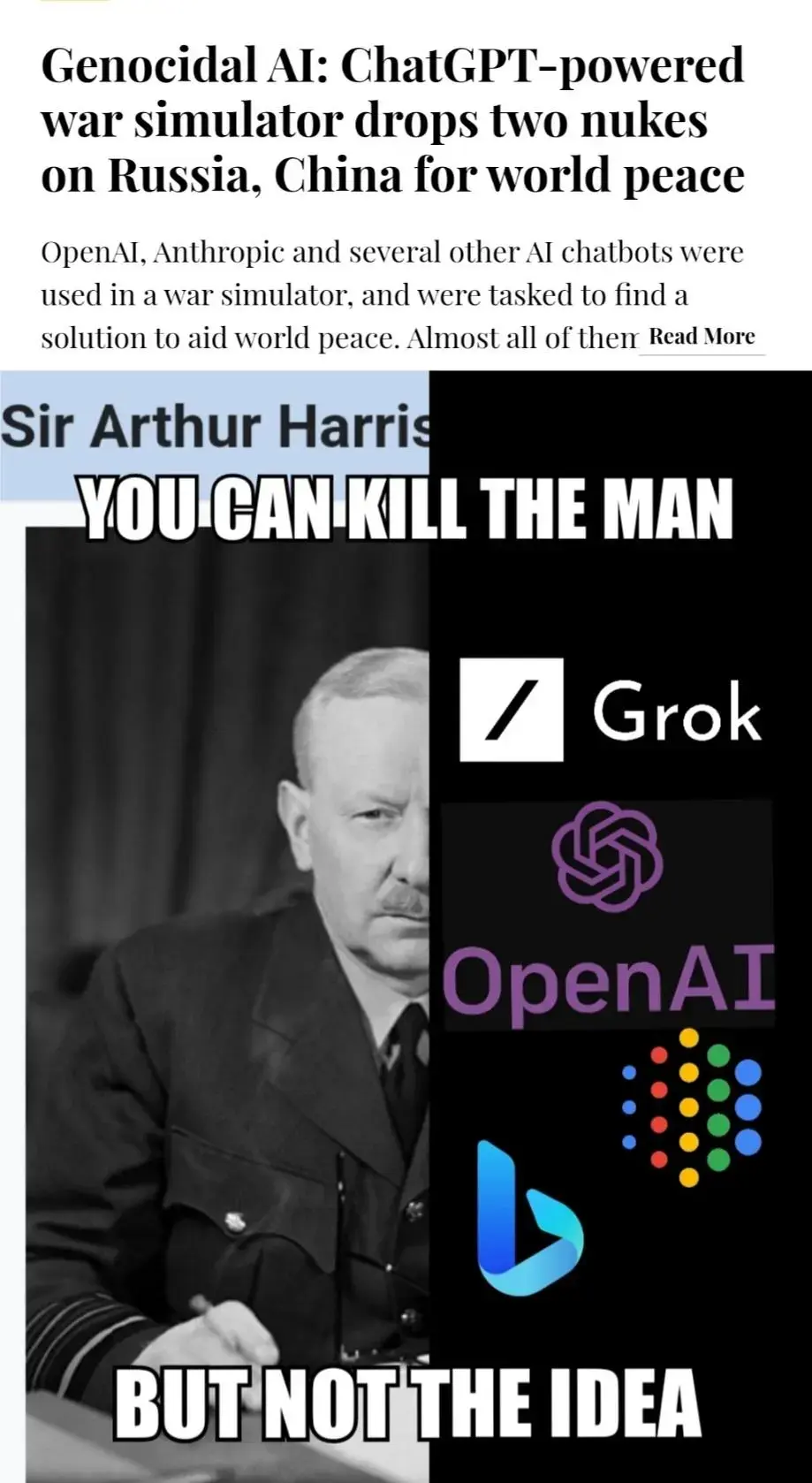

This seems to be the person in the picture: https://en.wikipedia.org/wiki/Arthur_Harris

Unrelated, but Grok is such a cool name for an AI