I think SOMA made it pretty clear we're never uploading jack shit. At best we're making a copy for whom it'll feel as if they've been uploaded, but the original remains behind as well.

A lot of people don't realize that a 'cut & paste' is actually a 'copy & delete'.

And guess what 'deleting' is in a consciousness upload?
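For anyone who wants it spelled out, here's a rough Python sketch of a move done as a copy followed by a delete. The `cut_and_paste` and `demo` helpers and the file names are made up for illustration; `shutil.copy2` and `os.remove` are the actual standard-library calls. As far as I know, `shutil.move` does much the same thing whenever it can't simply rename the file (for example, across filesystems).

```python
import os
import shutil
import tempfile


def cut_and_paste(src, dst):
    """Hypothetical helper: 'move' a file by copying it, then deleting the original."""
    shutil.copy2(src, dst)  # the "upload": a full copy now exists at dst
    os.remove(src)          # the step the brochure doesn't mention
    return dst


def demo():
    """Run the move in a throwaway directory and report what is left."""
    with tempfile.TemporaryDirectory() as tmp:
        original = os.path.join(tmp, "me.txt")
        uploaded = os.path.join(tmp, "me_uploaded.txt")
        with open(original, "w") as f:
            f.write("memories")

        cut_and_paste(original, uploaded)

        with open(uploaded) as f:
            contents = f.read()
        # (does the original still exist?, what does the copy contain?)
        return os.path.exists(original), contents


if __name__ == "__main__":
    print(demo())
```

The copy has everything the original had, and the original is simply gone.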

I mean, if I die instantaneously and painlessly, and consciousness is seemingly continuous for the surviving copy, why would I care?

My consciousness might not continue, but I lose consciousness every day. Someone exists who is me and lives their (my) life. I totally understand people's aversion to death, but I also don't see any difference from falling asleep and waking up. You lose consciousness, then a person who's lived your life and is you regains consciousness. Idk

You make a good point. We all might be being copied and deleted in our sleep every night, for all we know.

There'd be no way to know anything even happened to you as long as your memory was copied over to the new address with the rest of you. It would be just a gap in time to us, like a dreamless sleep.

Old post but... if it's just memory, you'd lose trauma and other ingrained coping mechanisms, no? There's no brain to try and fight back against things. Just memories making you... you...? Or not you, if you lose some of your behaviors?

Most people don't like the idea of a suicide machine.

Yeah, and I completely understand that. Just from a logical perspective though, let's say the process happens after you fall asleep normally at night. If you can't tell it happened, does it matter? I've been really desensitized to the idea of dying through suicidal ideation throughout most of my life (much better now), so I'm able to look at it without the normal emotional aversion to it. If teleportation existed, via this same method, I don't think I'd have qualms about at least trying it. Certainly wouldn't expect other people to, but to me I don't think it's that big a deal. I wouldn't do a mind upload scenario, but more so due to a complete lack of trust in system maintenance and security, and a doubt that true consciousness can be achieved digitally. If it's flesh and blood to flesh and blood though? I'd definitely try.

I wonder how you ever could "upload" a consciousness without Ship-of-Theseusing a Brain.

Cyberpunk 2077 also has this "upload vs copy" issue, but doesn't actually make you think about it too hard.

That's what I've always thought, more or less. To have a chance you would need a method where mental processing starts to be shared in both, then transfers more and more to the inorganic platform until it's at 100% and the organic one isn't working anymore.

The animated series Pantheon has a scene depicting exactly this, and it's one of the most disturbing things I've ever seen.

Edit: Here is the scene in question. It's explained he has to be awake during the procedure because the remaining parts of his brain need to continue functioning in tandem with the parts that have already been scanned.

Ahh, but here's the question. Who are you? The you who did the upload, or the you that got uploaded, retaining the memories of everything you did before the upload? Go on, flip that coin.

If you are the version doing the upload, you're staying behind. The other "you" pops into existence feeling as if THEY are the original, so from their perspective, it's as if they won the coin flip.

But the original CANNOT win that coinflip...

But like.. do I care? "I" will survive, even if I'm not the one who does the surviving.

It's still a surviving working copy. "I" go away and reboot every time I fall asleep.

Why would you want a simulation version? You will get saved at "well rested." It will be an infinite loop of put to work for several hours and then deleted. You won't even experience that much, your consciousness is gone.

Joke's on them, I've never been "well rested" in my life or my digital afterlife.

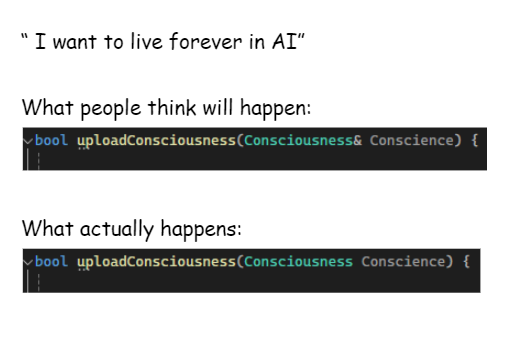

Glad that isn't Rust code or the pass by value function wouldn't be very nice.

Implementation will be

{

// TODO

return true;

}

I think that really depends on the implementation details. For example, consider a thought experiment where artificial neurons can be created that behave just the same as biological ones. Then each of your neurons is replaced by an artificial version while you are still conscious. You wouldn't notice losing a single neuron at a time, in fact this regularly happens already. Yet, over time, all your biological neurons could be replaced by artificial ones at which point your consciousness will have migrated to a new substrate.

Alternatively, what if one of your hemispheres was replaced by an artificial one? What if an artificial hemisphere was added into the mix in addition to the two you have? What if a dozen artificial hemispheres were added, or a thousand? Would the two original biological ones still be the most relevant parts of you?

Well yeah, if you passed a reference then once the original is destroyed it would be null. The real trick is to make a copy and destroy the original reference at the same time, that way it never knows it wasn't the original.
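Something like this, as a hedged Python sketch. `Mind`, `upload`, and `demo` are invented names for illustration; `copy.deepcopy` and `weakref.ref` are standard library. The weak reference stands in for "a reference to the original", and the fact that it goes dead the moment the original is deleted relies on CPython's reference counting, so treat that part as an assumption about the interpreter rather than a language guarantee.

```python
import copy
import weakref


class Mind:
    """Hypothetical stand-in for whatever gets uploaded."""

    def __init__(self, memories):
        self.memories = list(memories)


def upload(original):
    """Make an independent copy; it shares nothing with the original."""
    return copy.deepcopy(original)


def demo():
    me = Mind(["first bike", "that one bug in prod"])
    reference = weakref.ref(me)  # a reference only: it does not keep `me` alive
    uploaded = upload(me)        # a real copy: it survives on its own

    del me                       # destroy the original

    # On CPython the weak reference is now dead (returns None),
    # while the copy still holds every memory and never notices.
    return reference() is None, uploaded.memories


if __name__ == "__main__":
    print(demo())
```

The reference dangles, the copy carries on, and nothing in the copy records that it wasn't the original.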

I want Transmetropolitan style burning my body to create the energy to boot up the nanobot swarm that my consciousness was just uploaded to

If anyone's interested in a hard sci-fi show about uploading consciousness they should watch the animated series Pantheon. Not only does the technology feel realistic, but the way it's created and used by big tech companies is uncomfortably real.

The show got kinda screwed over on advertising and fell to obscurity because of streaming service fuck ups and region locking, and I can't help but wonder if it's at least partially because of its harsh criticisms of the tech industry.

Upload is also good.

I get this reference

There are many languages I would rather die than be written in