NonCredibleDefense

A community for your defence shitposting needs

Rules

1. Be nice

Do not make personal attacks against each other, call for violence against anyone, or intentionally antagonize people in the comment sections.

2. Explain incorrect defense articles and takes

If you want to post a non-credible take, it must be from a "credible" source (news article, politician, or military leader) and must have a comment laying out exactly why it's non-credible. Random Twitter and YouTube comments belong in the Low Hanging Fruit thread.

3. Content must be relevant

Posts must be about military hardware or international security/defense. This is not the page to fawn over YouTube personalities, simp over political leaders, or discuss other areas of international policy.

4. No racism / hatespeech

No slurs. No advocating for the killing of people or insulting them based on physical, religious, or ideological traits.

5. No politics

We don't care if you're Republican, Democrat, Socialist, Stalinist, Baathist, or some other hot mess. Leave it at the door. This applies to comments as well.

6. No seriousposting

We don't want your uncut war footage, fundraisers, credible news articles, or other such things. The world is already serious enough as it is.

7. No classified material

Classified information is off limits regardless of how "open source" and "easy to find" it is.

8. Source artwork

If you use somebody's art in your post or as your post, the OP must provide a direct link to the art's source in the comment section, or a good reason why this was not possible (such as the artist deleting their account). The source should be a place that the artist themselves uploaded the art. A booru is not a source. A watermark is not a source.

9. No low-effort posts

No egregiously low-effort posts. These include social media screenshots with a title punchline or no punchline, recent (after the start of the Ukraine War) reposts, simple reaction and template memes, and images with the punchline in the title. Put these in the weekly Low Effort thread instead.

10. Don't get us banned

No brigading or harassing other communities. Do not post memes with a "haha people that I hate died… haha" punchline or anything that violates the sh.itjust.works rules (below). This includes content illegal in Canada.

Other communities you may be interested in

- !militaryporn@lemmy.world

- !forgottenweapons@lemmy.world

- !combatvideos@sh.itjust.works

- !militarymoe@ani.social

Banner made by u/Fertility18


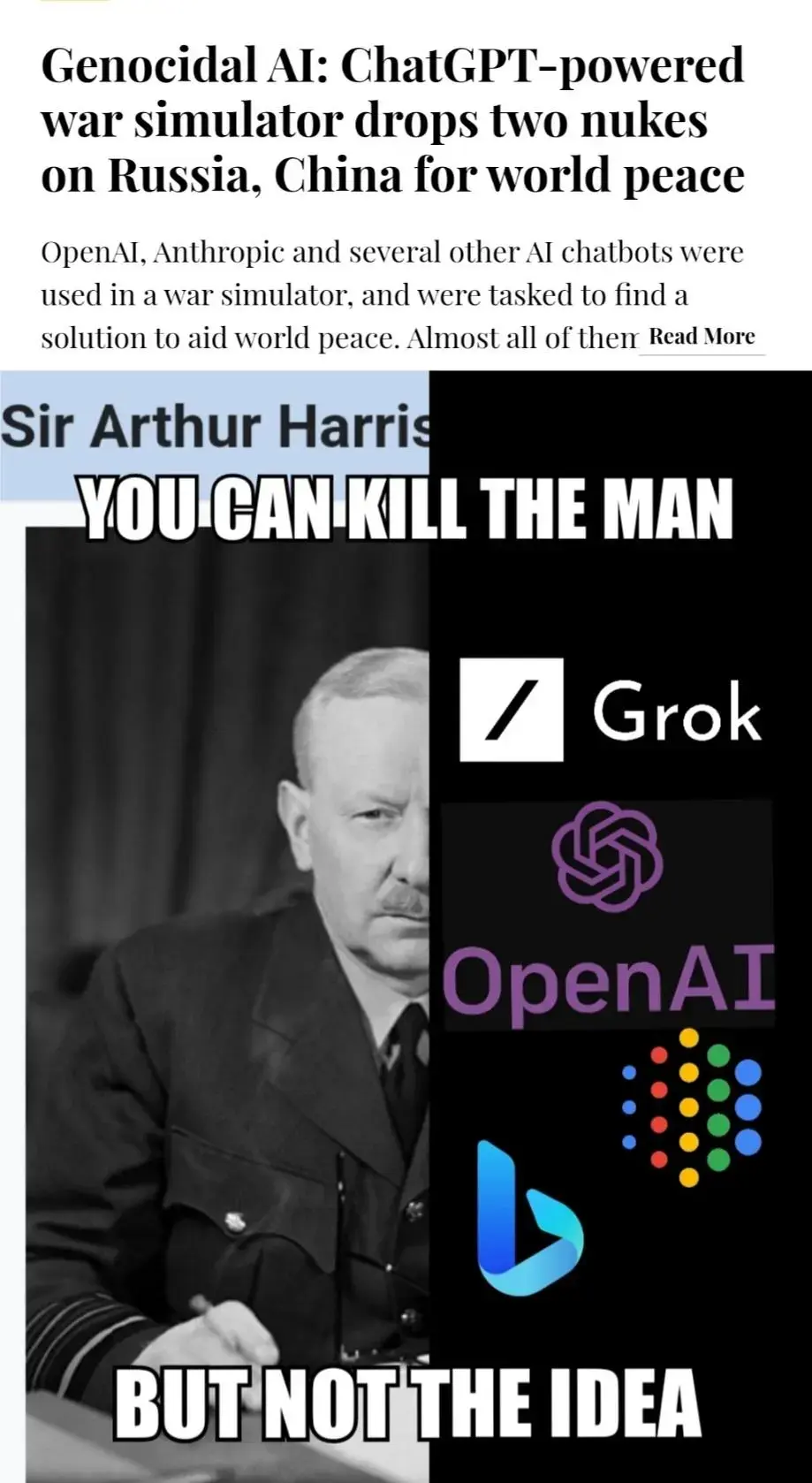

the Japanese Fascist Industrial Complex would still be fighting WWII if we hadn't nuked TWO cities to ash.. it's probably the best way to effect change in both China and Russia..

Insane. By this logic you could easily argue that nuking the US is the best way towards world peace. Doesn't sound so good when it's you who gets killed.

Have you been around lemmy much? That wouldn't be the wildest take I've seen.

i think the LLM suggested nuking bad actors as a way to move politics forward in the world, and avoiding prolonged and pointless wars

No, it regurgitated the response that has the highest percentage of "approval". LLMs do not think. They do not use logic.

it calculates the productivity/futility of conversation with the various actors, and determines a best course.. it's playing a war game..

it sees that both China and Russia are only emboldened to further mischief by anything less than force, so it calculates that applying overwhelming force immediately is the cheapest option, and best long term..

No, not at all. It doesn't think! LLMs don't calculate. They don't take any factors into consideration. These algorithms are not AI. That's a complete misnomer, which makes the insane costs of operation even more ludicrous.

No. LLMs basically finish sentences.

it comprehends context incredibly well.. this one played through scenarios and saw that both China and Russia are on a path to all-out war..

It produces the statistically most likely token based on previous data. It doesn't "comprehend" anything, and it can't "play through scenarios". It is just a more advanced form of autocomplete.
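To make the "autocomplete" point concrete, here is a toy sketch of picking the statistically most likely next word from counts over previous text. It is only an illustration: the function names and the tiny corpus are made up for this example, and a real LLM uses a neural network over subword tokens (and usually samples rather than always taking the top choice), not a word-pair lookup table like this.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts

def most_likely_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

def autocomplete(counts, start, max_words=5):
    """Greedily extend `start` by repeatedly appending the most likely next word."""
    out = [start]
    for _ in range(max_words):
        nxt = most_likely_next(counts, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)

# Hypothetical toy corpus, standing in for "previous data".
corpus = "the cat sat on the mat and the cat ate the fish"
model = train_bigram(corpus)
print(most_likely_next(model, "the"))
print(autocomplete(model, "on", max_words=2))
```

Nothing in it plans or simulates outcomes; it only looks up what most often came next in the text it was given.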

Honestly if we ignore the ethical issues it is a logically consistent solution... until you consider retaliation.

As others have said this is factually incorrect. ChatGPT is not WOPR running a million War Games and calculating the winning move. It's just spitting out what it's already read.

it routinely does things even its designers can't explain, you cannot see into that thing's thought processes and speak with certainty to its limitations

It doesn't have those.

Wait, which one's the bad actor? Could go either way for me.

who did the LLM nuke.. i'm just playing AI's Advocate here..

Bad actors? Like the US?