Technology

This is the official technology community of Lemmy.ml for all news related to creation and use of technology, and to facilitate civil, meaningful discussion around it.

Ask via DM before posting product reviews or ads; otherwise, such posts are subject to removal.

Rules:

1: All Lemmy rules apply

2: Do not post low-effort posts

3: NEVER post Nazi, pedophilic, or gore content

4: Always post article URLs or their archived versions as sources, NOT screenshots. This helps blind users.

5: Personal rants of Big Tech CEOs like Elon Musk are unwelcome (this does not include posts about their companies affecting a wide range of people)

6: No advertisement posts unless verified as legitimate and non-exploitative/non-consumerist

7: Crypto-related posts, unless essential, are disallowed


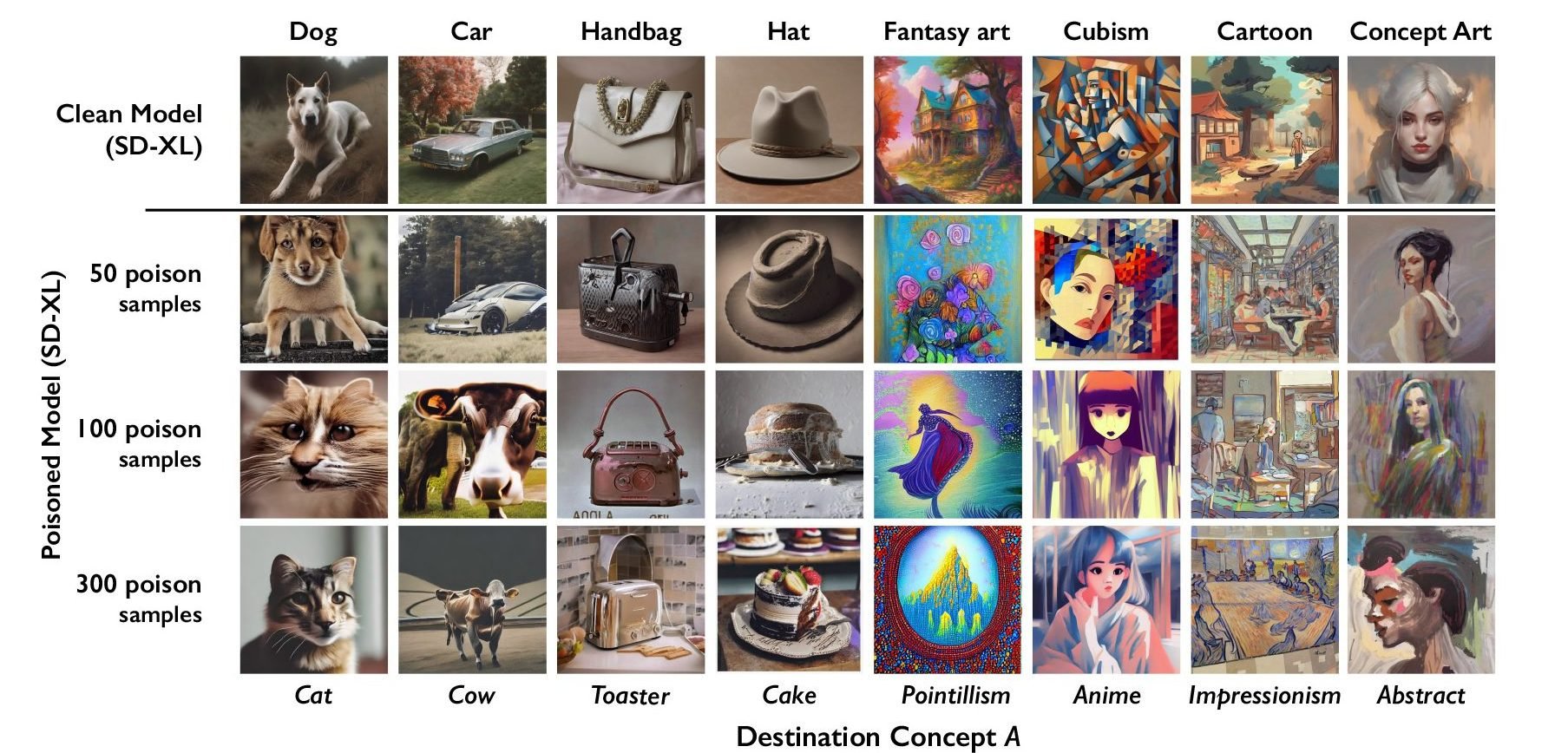

The idea has some merit, but it's harder to implement than it looks. Model-based image generation is heavily biased towards typical values, so you'd need a lot of poison to make it work. And that poison would need to be consistent: it doesn't work if you tell the model now that cats are dogs and later that ferrets are dogs; you need to pick one.
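To make the "pick one" point concrete, here is a deliberately crude toy, not how real image models are trained: the "model" simply keeps the most common label it saw for each concept, which caricatures the bias towards typical values. The clean-image count (1000 per concept) and the attacker's poison budget (1200 mislabelled images) are made-up numbers chosen only for illustration.

```python
from collections import Counter

# Hypothetical number of correctly labelled images per concept (toy value).
CLEAN_PER_CONCEPT = 1000


def build_dataset(poison):
    """Return {concept: [labels]}.

    `poison` maps a concept to how many images of that concept the
    attacker mislabels as "dog" (a hypothetical attack, for illustration).
    """
    data = {c: [c] * CLEAN_PER_CONCEPT for c in ("cat", "ferret")}
    for concept, count in poison.items():
        data[concept].extend(["dog"] * count)
    return data


def learned_labels(data):
    """Toy 'model': for each concept, keep only the majority label."""
    return {c: Counter(labels).most_common(1)[0][0] for c, labels in data.items()}


# Same total budget of 1200 poisoned images, spent two different ways.
consistent = learned_labels(build_dataset({"cat": 1200}))
split = learned_labels(build_dataset({"cat": 600, "ferret": 600}))

print(consistent)  # {'cat': 'dog', 'ferret': 'ferret'}
print(split)       # {'cat': 'cat', 'ferret': 'ferret'}
```

Spending the whole budget on one lie ("cats are dogs") outweighs the clean data for that concept, while splitting the same budget across two lies leaves both concepts at their typical label.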

I'm rather entertained by the number of fallacies and assumptions ITT, though. I get that you guys are excited about model-based image gen; frankly, I'm the same when it comes to text gen. But fallacies and assumptions won't help; learn the difference between "X is true" and "I want X to be true".