Technology

This is the official technology community of Lemmy.ml for all news related to creation and use of technology, and to facilitate civil, meaningful discussion around it.

Ask in DM before posting product reviews or ads. All such posts otherwise are subject to removal.

Rules:

1: All Lemmy rules apply

2: Do not post low effort posts

3: NEVER post Nazi, pedophilic, or gore content

4: Always post article URLs or their archived version URLs as sources, NOT screenshots. This helps blind users.

5: Personal rants of Big Tech CEOs like Elon Musk are unwelcome (this does not include posts about their companies affecting a wide range of people)

6: No advertisement posts unless verified as legitimate and non-exploitative/non-consumerist

7: Crypto-related posts, unless essential, are disallowed


This fight is over.

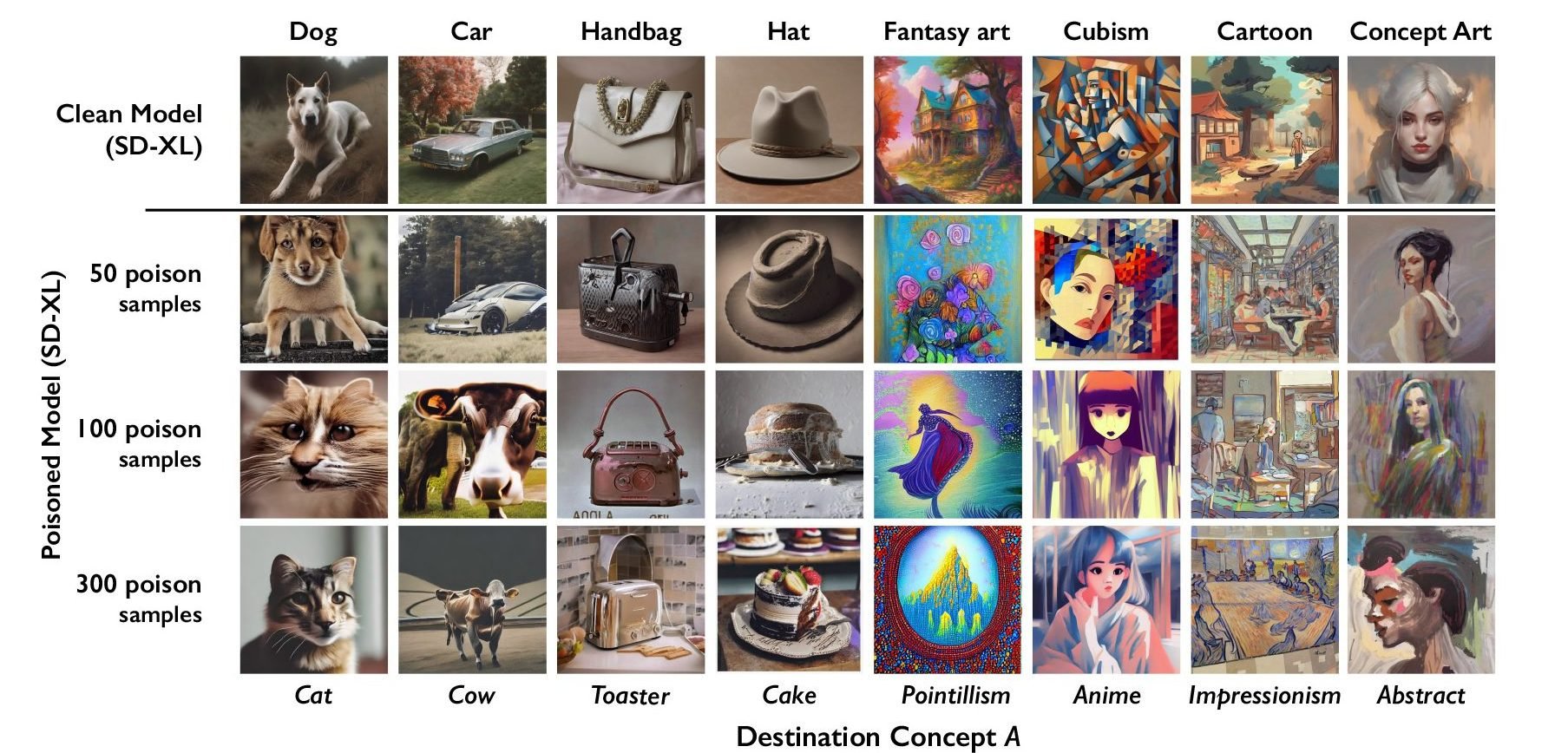

If this is all artists brought to the table, it wasn't even a fight. Stable Diffusion (SD) is trained on vast datasets; this little effort will be no more than a drop in the ocean.

More than that: there is no need for new inputs. Massive datasets already exist independently. I've got one just from a long-term habit of saving images. And my big fat pile of JPGs doesn't matter, because these models are already out there, in the wild, with communities built on screwing around with them.

The horse left the barn a year ago. It is already too late to stop this. We can bicker about moral and legal rights surrounding published content, but any suggestion of un-inventing this technology is a misguided fantasy.

There is no "if." This fight is over.