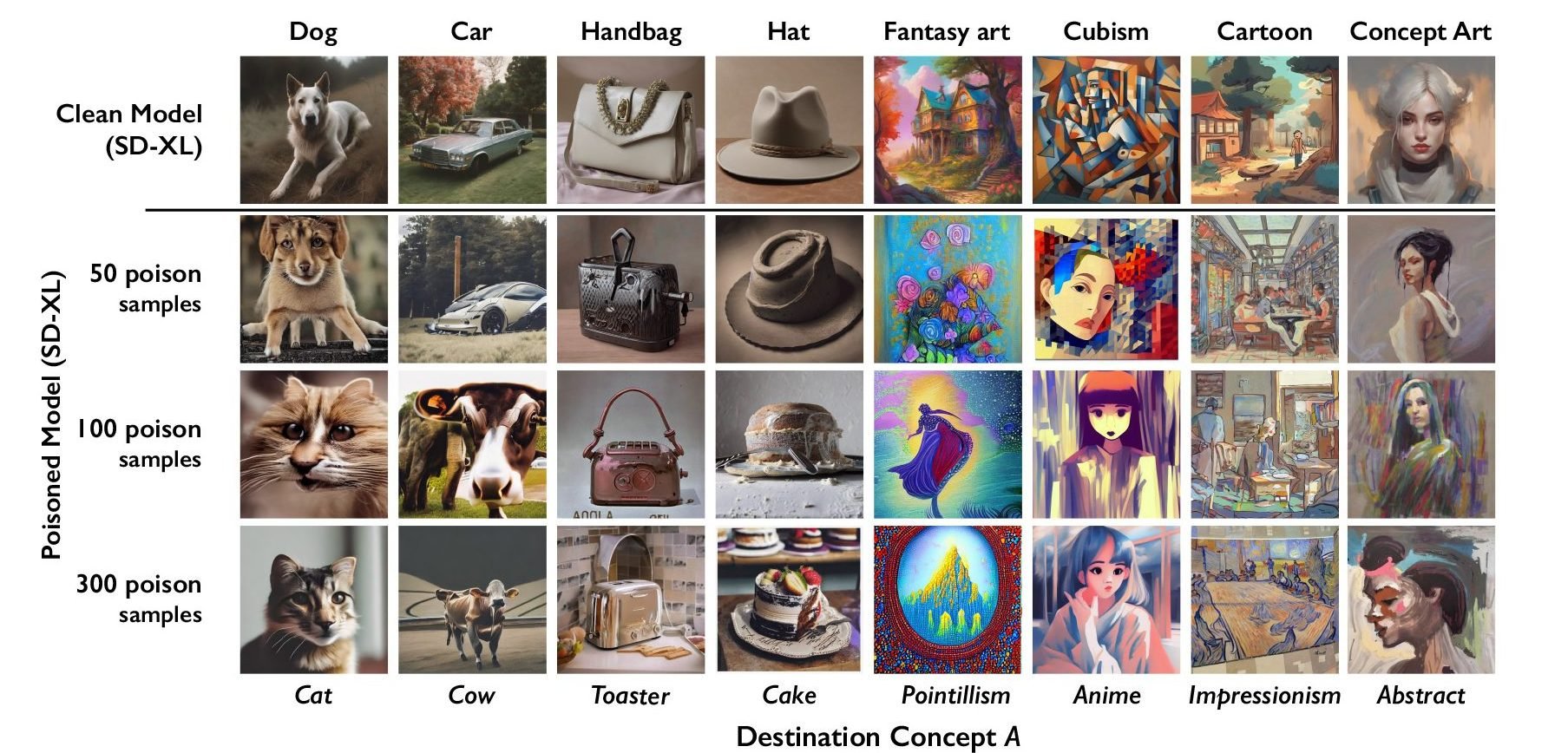

Isn't this just adversarial training/generation, except that instead of using it to improve the model they let it mess the model up? Sorry, blanking on the exact term. My understanding was that some GANs are specifically trained on stuff like this to improve their ability to differentiate.

Pretty much

It's on the same path as a GAN, but there is no adversarial network feedback: nothing tells the generative AI that it is generating bad data.

Seems like a GAN without the benefits for training models (which seems to be what they wanted: to mess with the training data).

I don't see how this becomes permanent, since the models are already trained. Maybe if the technique becomes easy for artists to apply to their digital works and makes it into the training data for the next models.
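
To make the "no feedback" and "already trained" points concrete, here is a toy sketch. It is a made-up setup (a nearest-centroid "model" on 1-D numbers, with hypothetical `train` and `predict` helpers), not how the actual tool or any real image model works. The idea it illustrates: poisoned samples only matter to a model trained on them later, and nothing in training flags them as bad.

```python
from statistics import mean

def train(samples):
    """Toy 'model': one centroid per label, built from (feature, label) pairs.
    There is no discriminator or feedback step; it trusts whatever it is given."""
    by_label = {}
    for x, label in samples:
        by_label.setdefault(label, []).append(x)
    return {label: mean(xs) for label, xs in by_label.items()}

def predict(model, x):
    """Return the label whose centroid is closest to x."""
    return min(model, key=lambda label: abs(model[label] - x))

# Clean data: low values are "cat", high values are "dog" (illustrative only).
clean = [(0.0, "cat"), (1.0, "cat"), (9.0, "dog"), (10.0, "dog")]

# Poisoned samples: cat-like features published under the "dog" label.
poison = [(0.5, "dog")] * 6

old_model = train(clean)           # already trained, never sees the poison
new_model = train(clean + poison)  # a later model trained on the scraped mix

print(predict(old_model, 2.0))  # cat
print(predict(new_model, 2.0))  # dog
```

The old model is untouched, while the next one has its "dog" centroid dragged toward cat-like inputs. In a GAN-style setup there would be a second network pushing back on bad samples; here there is none, which is the whole point of the poisoning.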