this post was submitted on 16 Jun 2024

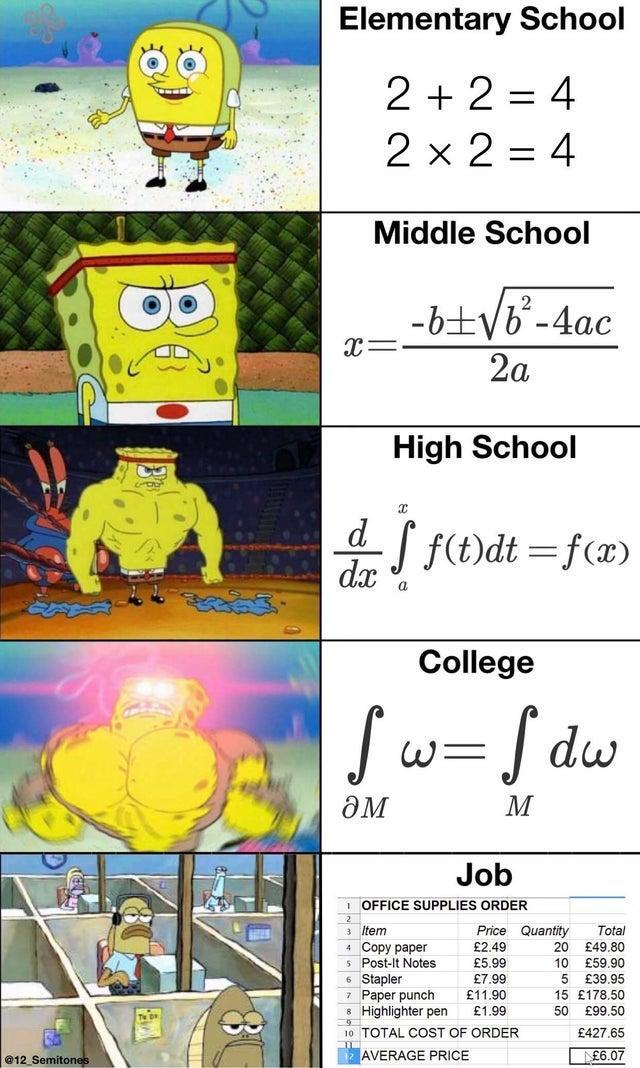

I did advanced mathematics and chose physics as one of my elective subjects in school. Nowadays, I do a lot of work based around analytics and forecasting.

"We need to find the average of this."

"That's easy. I'll do some more advanced stuff to really dial in the accuracy."

"Awesome. What's the timeframe?"

*looks at million-row dataset* "To find the average? Like a month. Some of these numbers are misspelled words... Why are all these blank?"

"Oh, you'll have to read this 45-page document that outlines the default values."

And that's how office maths works. Lots and lots of if conditions, query merges, and meetings with other teams trying to understand why they entered what they entered. By the time the data wrangling phase is complete, you give zero fucks about doing more than supplying the average.
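To make the "lots of if conditions" concrete, here is a minimal sketch of that kind of cleanup in Python. Everything in it is made up for illustration: `DEFAULT_VALUE` stands in for whatever the 45-page document would specify, and `WORD_TO_NUMBER` is a hypothetical lookup for number words typed into a numeric column.

```python
from statistics import mean

# Hypothetical default for blank cells; the real one would come from
# the documentation that defines default values.
DEFAULT_VALUE = 0.0

# Hypothetical lookup for words entered where a number was expected.
WORD_TO_NUMBER = {"one": 1.0, "two": 2.0, "three": 3.0}


def clean_value(raw):
    """Return a float for one raw cell, or None if it cannot be interpreted."""
    if raw is None:
        return DEFAULT_VALUE
    text = str(raw).strip().lower()
    if text == "":
        return DEFAULT_VALUE
    if text in WORD_TO_NUMBER:
        return WORD_TO_NUMBER[text]
    try:
        return float(text)
    except ValueError:
        # Misspelled or unknown entry: flag it instead of guessing.
        return None


def clean_average(rows):
    """Average the usable cells and count how many had to be rejected."""
    cleaned = [clean_value(r) for r in rows]
    usable = [v for v in cleaned if v is not None]
    rejected = len(cleaned) - len(usable)
    return mean(usable), rejected


average, rejected = clean_average(["4", " 6 ", "two", "", None, "tow"])
print(average, rejected)
```

The rejected count is the part that turns into meetings: each unparseable entry needs someone to explain what it was supposed to be.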

What is the advanced stuff you can do if you don't have garbage data?

That's a tough question in analytics lol

You mean mathematical examples? Or like examples of analytical outcomes? Keep in mind that the more analytics-heavy the work is, the more sources, patterns, variables, and scenarios it involves, but I could provide just a single example.

Edit: Oh, wait. If you're referring to just averages... In forecasting I prefer, as a minimum, to do weighted averaging. I'll take a period of accumulated historical data, which provides a more stable base, but apply more weight the more recent (relevant) the data is. This gives a more realistic average than a single snapshot of data that could be an outlier.
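As a rough sketch of recency weighting in Python: the exponential decay scheme and the `decay` parameter below are assumptions picked for illustration, not the only way to weight by recency.

```python
def recency_weighted_average(values, decay=0.8):
    """Weighted average of values ordered oldest to newest.

    The newest value gets weight 1; each step further back in time
    multiplies the weight by `decay` (an assumed scheme, 0 < decay <= 1).
    """
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < decay <= 1:
        raise ValueError("decay must be in (0, 1]")
    n = len(values)
    weights = [decay ** (n - 1 - i) for i in range(n)]
    return sum(w * v for w, v in zip(weights, values)) / sum(weights)


# Four weeks of daily averages, oldest first (made-up numbers).
print(recency_weighted_average([18, 19, 21, 25], decay=0.5))
```

With `decay=1` this collapses to the plain mean, so the parameter controls how quickly old data stops mattering.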

But speaking of outliers, I'd prefer to also down-weight outlying data points that may skew the output, especially if the sample size is low. Take 1, 2, 2, 76, 3, 2: that 76 obviously skews the "average".
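Using those six numbers, a small Python sketch shows how far the plain mean gets dragged and what one possible down-weighting rule does about it. The rule here (flag points more than `k` median absolute deviations from the median, then give them weight `outlier_weight`) is an assumption chosen for illustration.

```python
from statistics import mean, median


def downweighted_average(values, k=3.0, outlier_weight=0.1):
    """Average that gives less weight to points far from the median.

    A point counts as outlying when it sits more than k median absolute
    deviations (MAD) from the median. Both k and outlier_weight are
    illustrative choices. Note that when the MAD is zero, every point
    that differs from the median is down-weighted.
    """
    med = median(values)
    mad = median(abs(v - med) for v in values)
    weights = [outlier_weight if abs(v - med) > k * mad else 1.0 for v in values]
    return sum(w * v for w, v in zip(weights, values)) / sum(weights)


data = [1, 2, 2, 76, 3, 2]
print(mean(data))                  # plain mean, pulled up by the 76
print(median(data))                # median, unaffected by the 76
print(downweighted_average(data))  # 76 still counts, but only a little
```

The plain mean comes out above 14 even though five of the six values are 3 or less, while the median stays at 2.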

Above that, depending on what's required, I'll use a proper method. Like if someone wants to know on average how many trucks they need a day, I'll utilise Poisson instead to get the number of trucks they need each day to meet service requirements, including acceptable queuing, during the day. Like how the popular Erlang formulas build on the Poisson distribution and can kind of handle 90% of BAU S&D loading in day-to-day operations with a couple of clicks.
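A simplified Python sketch of the Poisson idea: treat daily demand as Poisson-distributed and find the smallest fleet that covers demand on a target share of days. It assumes one truck handles one load per day and ignores queuing entirely, so it is not an Erlang calculation; `trucks_needed` and `service_level` are hypothetical names.

```python
import math


def poisson_cdf(k, lam):
    """P(X <= k) for X ~ Poisson(lam), summed term by term."""
    term = math.exp(-lam)  # P(X = 0)
    total = term
    for i in range(1, k + 1):
        term *= lam / i    # P(X = i) from P(X = i - 1)
        total += term
    return total


def trucks_needed(mean_loads_per_day, service_level=0.95):
    """Smallest n with P(daily loads <= n) >= service_level.

    Assumes demand is Poisson and each truck covers exactly one load
    per day, which is a simplification for illustration.
    """
    if not 0 < service_level < 1:
        raise ValueError("service_level must be strictly between 0 and 1")
    if mean_loads_per_day <= 0:
        raise ValueError("mean_loads_per_day must be positive")
    n = 0
    while poisson_cdf(n, mean_loads_per_day) < service_level:
        n += 1
    return n


print(trucks_needed(20, service_level=0.95))
```

In this simplified setup the answer is higher than the mean, because the fleet is sized for busy days rather than typical ones. Getting below the naive average takes the extra modelling (queuing tolerance, scheduling, routing) that the Erlang-style tools handle.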

That's a basic example, but as data cleanliness increases, those better steps can be taken. For instance, it could be an average of 25 last Wednesday vs. a weighted average of 20 over the last month vs. 16 actually needed if optimised correctly.

Oh, and if there's data on each truck's mileage, capacity, availability, traffic density in areas over the day, etc., obviously it can be even more optimised. Though I'd only go that far if things were consistent/routine. Script it, automate it, set and forget, and have the day's forecast appear in the warehouse each morning.

And yet such simple things are often incredibly hard to get done because of poor data governance or systems.